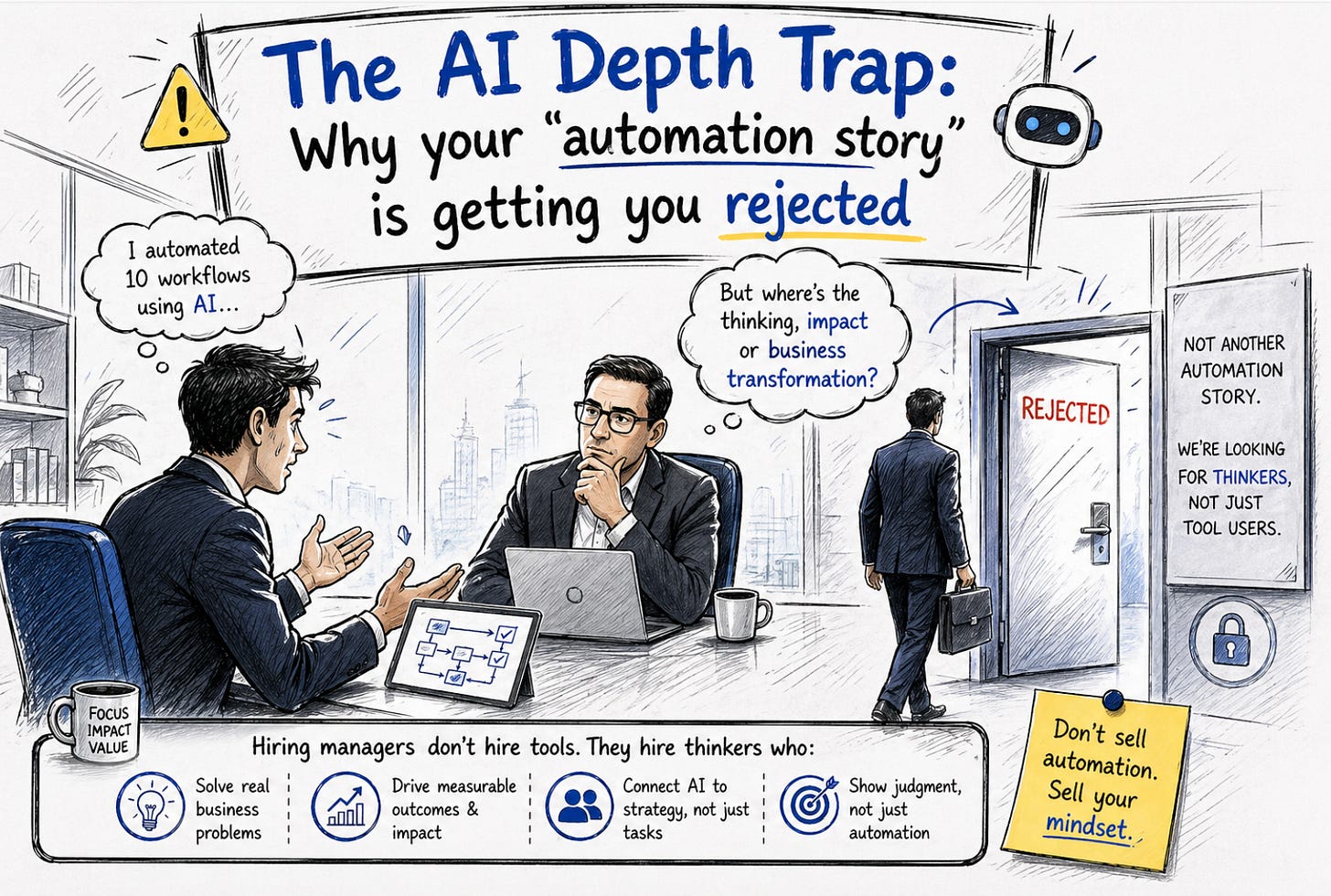

The AI Depth Trap: Why your “automation story” is getting you rejected

If your interview answer stops at “Efficiency,” you’re signaling that you’re a user, not a leader.

A candidate sat across from me recently, beaming with confidence. “I’ve integrated AI into everything,” he said. “Backlog generation, sprint summaries, automated reports... my team’s velocity has never been higher.”

He paused, waiting for me to be impressed.

Instead, I asked one question: “What did you have to throw away because the AI got it wrong?”

Silence.

He went back to explaining the tool’s features. In that moment, the “Senior” label I had mentally placed on him started to peel off. He had fallen into the AI Depth Trap.

Most professionals with 12 years of experience are currently trying to “sound relevant” by mentioning AI everywhere. But to a hiring panel, “I use AI for user stories” sounds like a pilot saying, “I use the autopilot button.” We know the button exists. We want to know if you can fly the plane when the sensors fail.

The shift from “Usage” to “Judgement” is where the promotion lives.

💡 The Weekly Intel:

If you’re finding it hard to shift from “Task-talk” to “Strategy-talk” in your interviews, you’re not alone. I’ve built a library of scripts for exactly this transition.

📥 Download your free weekly Agile Interview Q&A Set here →

I’ve sat on enough panels to see the pattern.

The Mid-Level Answer: “AI helped us move 30% faster.”

The Senior-Level Answer: “AI helped us move faster, which actually created a new risk: False Clarity. We had to redesign our refinement process to catch the edge cases the model missed and the assumptions it hallucinated.”

See the difference? The second person isn’t talking about a tool; they are talking about Systemic Risk.

I remember a PO who stood out because she didn’t start with the AI. She started with the mess.

“We had inconsistent story quality slowing down the devs,” she said. “We used AI to create a baseline, but we realized that if we relied on it too much, the team stopped thinking. So, I implemented a ‘Human-in-the-loop’ check specifically for dependencies.”

She wasn’t describing a feature. She was describing Judgement.

The irony of the AI era is that the more the tools do the “work,” the more the interviewers value your Skepticism.

If your answer suggests that the AI always works perfectly, you aren’t showing confidence—you’re showing a lack of experience.

Real depth comes from showing where the tools fell short:

“It gave us options, but not decisions.”

“It improved speed, but threatened alignment.”

“It drafted the stories, but it didn’t understand our domain.”

I’m curious—what’s the biggest “hallucination” or mistake an AI tool has made in your workflow this month? How did you catch it? Hit reply and let’s talk about the ‘Human’ side of the loop. I read every response.

🚀 Ready to find your “Voice”?

Most professionals have the experience, but they lack the “Executive Vocabulary” to close the deal in a 45-minute interview. Don’t go in with surface-level answers.

Don’t prepare “AI answers.” Prepare stories of Governance. Because in a world where everyone has the tool, the only differentiator left is your ability to know when to turn it off.

AI will get you the interview. Your awareness of its limitations will get you the job. Stop sounding like a user. Start sounding like the person who understands the impact.

Weekly Interview Intel | Set #01

Topic: Governing AI (Moving from “User” to “Director”)

The Question: “We’re seeing teams use AI to speed up backlog creation, but the quality is inconsistent. How would you ensure AI improves our throughput without sacrificing the technical depth of our stories?”

The “Standard” Answer (Avoid This):

“I would create a prompt template for the team to ensure all stories have Acceptance Criteria and a clear ‘Why.’ Then, I’d run a training session to show everyone how to use ChatGPT more effectively to save time.”

Why this fails: It focuses on features and training. It sounds like a Coordinator. It assumes the tool is the solution rather than a potential source of “False Clarity.”

The “Senior-Level” Answer (Use This):

“I treat AI as a ‘Drafting Clerk,’ not an ‘Author.’ To maintain depth, I implement a Three-Step Validation Loop:

The Context Guard: Before using AI, the PO must document the ‘Unique Domain Constraint’—the one thing the AI won’t know about our specific architecture.

The Anti-Hallucination Review: During refinement, the team is tasked with finding one ‘Generic Assumption’ the AI made that doesn’t apply to our edge cases. We don’t move forward until that’s identified.

The Accountability Pivot: I make it clear that the AI didn’t ‘write’ the story; the Human Lead did. If a story lacks depth, we don’t blame the tool—we revisit the validation process.

This ensures we get the 30% speed boost of drafting without the 50% technical debt of ‘Generic’ stories.”

The Logic (Why it works):

It demonstrates “Human-in-the-loop”: You aren’t just letting the tool run wild; you are governing it.

It uses “Executive Vocabulary”: Terms like ‘Unique Domain Constraint’ and ‘Anti-Hallucination Review’ signal that you understand the risks.

It highlights Judgement: You are showing that you prioritize Technical Depth over Velocity, which is what Directors care about.

The “Pro” Script for the Interview Follow-up:

If they ask, “Isn’t that extra process slowing the team down?”

Say this: “It’s a trade-off. We can move fast and break things in a sandbox, but in a production backlog, Speed without Accuracy is just expensive noise. I’d rather spend 5 extra minutes in refinement than 5 days in a botched sprint because of a ‘Generic’ AI story.”

Ace Every Interview Question — With Real Answers That Work

If you’re preparing for Scrum Master, Agile Coach, or Product Owner interviews, this is your go-to prep pack. New set every week!

📥 Download your free Agile Interview Q&A Set here →

Why Subscribe

Each week, I share battle-tested strategies, messy lessons, and practical tools that help Scrum Masters, Product Owners, and change agents like you make sense of chaos — without sugar-coating it.

If you found this useful, subscribe.

This isn’t theory. It’s real work, made a little easier — one step at a time.

“Just because I understand it, does not mean everyone understands it. And just because I do not understand it, does not mean no one understands it.”